No alert fires. No system throws an error. The model confabulates, and the organization relies on it. Most organizations assume they are reviewing AI outputs. In practice, many are reading confident text without asking whether a single sentence is accurate.

This is the hallucination problem. For internal audit functions serious about providing assurance over AI-dependent processes, it is one of the most underexamined risks sitting inside organizations right now. Courts have issued sanctions. Regulators have begun to formalize expectations. Boards are starting to ask questions that many internal audit functions cannot yet answer.

What Are AI Hallucinations?

An AI hallucination is not one thing. It takes at least three distinct forms, and internal auditors who treat them as interchangeable will design controls that miss the very failures they are meant to catch.

See Part One: Model Drift: When AI Models Lie and What Internal Audit Must Do about It

Consider a generative AI tool used by the legal team for regulatory research, contract review, and compliance memo drafting. Three distinct failure types can occur:

- Factual hallucination occurs when the model states something that is simply wrong. The AI produces a regulatory threshold, a case citation, or a policy requirement that does not exist. The output reads fluently and with authority. For example, the AI may claim that “FINTRAC requires a 15 percent variance threshold,” even though no such requirement exists.

- Citation hallucination occurs when the model references a source that does not exist or does not say what the model claims. The source may be a legal case, a regulatory document, or an internal report. For example, the AI may cite “Smith v. Canada, 2019 SCC 44,” but no such Supreme Court case exists.

- Reasoning hallucination occurs when the logic fails invisibly. The inputs and outputs both look coherent, but the AI has reached a conclusion that does not follow from the facts. For example, a regulation requires reporting when transactions exceed $10,000 in aggregate. The client makes two $6,000 transactions on the same day. The AI may conclude that no reporting is required because each transaction is below $10,000, even though the rule clearly requires aggregation.
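The aggregation example in the last bullet can be made concrete with a short sketch. It is illustrative only: the $10,000 threshold and the two $6,000 transactions come from the example, while the function names and the grouping by client and day are assumptions rather than a statement of any actual reporting rule.

```python
from collections import defaultdict
from datetime import date

THRESHOLD = 10_000  # reporting threshold taken from the example above

def flawed_check(transactions):
    """Mirrors the faulty reasoning: tests each transaction on its own."""
    return any(amount > THRESHOLD for _, _, amount in transactions)

def aggregate_check(transactions):
    """Sums amounts per client per day before comparing to the threshold."""
    totals = defaultdict(int)
    for client, day, amount in transactions:
        totals[(client, day)] += amount
    return any(total > THRESHOLD for total in totals.values())

# Hypothetical client and date; amounts are those used in the example.
transactions = [
    ("client-A", date(2025, 1, 15), 6_000),
    ("client-A", date(2025, 1, 15), 6_000),
]

print(flawed_check(transactions))     # False: no single transaction exceeds 10,000
print(aggregate_check(transactions))  # True: the same-day total is 12,000
```

Both functions return a plausible answer. Only a reviewer who knows that the rule requires aggregation can tell which one is right.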

What makes all three forms dangerous is that they are indistinguishable from accurate work without independent verification. A hallucination does not look like an error. It looks like competent output. That is precisely what makes it an internal audit issue.

Why This Is an Internal Audit Issue

Some audit functions still treat AI output quality as the responsibility of technology teams or the business units deploying the tools. That instinct is understandable but misplaced. Technology teams can confirm that a model runs. They cannot confirm that its outputs are true. Business units can operationalize AI within their processes, but they are not always equipped to detect when outputs are fabricated or when reasoning silently drifts from underlying facts.

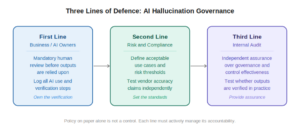

In practice, control ownership is not always cleanly divided. The first line often designs and operates controls within AI-enabled processes, especially where tools are embedded in business workflows. The second line, including risk, compliance, and quality assurance, may define standards, challenge control design, and in some cases perform independent testing. Roles vary by organization, and in many cases responsibilities overlap.

However, internal audit remains the only function explicitly mandated to provide independent assurance on whether these controls are appropriately designed and operating effectively.

Internal audit is not responsible for detecting hallucinations directly. Its role is more fundamental. It must determine whether the organization has effectively closed the governance gap between “the model runs” and “the output is true.” In many organizations, that gap remains wide and largely untested.

The consequences of leaving this gap unaddressed are tangible. Organizations face regulatory sanctions for relying on fictitious legal authority, reputational damage when fabricated content reaches clients or courts, financial decisions based on invented figures, and procurement actions driven by AI summaries that misrepresent contractual reality.

These are not technology failures. They are governance failures. And governance is core internal audit territory.

Real Cases: When AI Hallucinations Go Undetected

The following are matters of public record. They show how unverified AI output produced material legal and reputational consequences:

- Mata v. Avianca, Inc. (S.D.N.Y. 2023): A plaintiff’s attorney used ChatGPT to research a personal injury claim. The resulting brief cited six cases that did not exist, complete with invented case numbers, fabricated judicial quotations, and false citations. Judge P. Kevin Castel imposed a $5,000 sanction jointly on the two attorneys and their firm. This case established hallucination as a recognized professional liability risk.

- Coomer v. Lindell (D. Colo. 2025): In this high‑profile defamation action, defense attorneys filed an opposition brief containing nearly 30 defective citations and factual inaccuracies traceable to generative AI. Although the firm had an internal AI policy, Judge Nina Y. Wang found it ineffective because it lacked training, verification requirements, and supervisory review. The court imposed $3,000 sanctions on each attorney under Federal Rule of Civil Procedure 11, emphasizing that a written policy without implementation does not satisfy professional obligations.

- Johnson v. Dunn (N.D. Ala. 2025): A major law firm had issued explicit AI guidance restricting unsupervised use. A practice group co-leader submitted a brief with fabricated citations regardless. The court found monetary sanctions insufficient and disqualified the attorneys entirely, published the opinion in the Federal Supplement, and referred the matter to state bar regulators.

Across all three cases, the failure was not that AI tools were used. It was that outputs were accepted without verification, and no control existed to catch the gap before it caused harm.

The Hidden Layer Beneath Hallucination

Hallucinations get most of the attention because they are obvious once someone checks the output. But there is a quieter, more dangerous risk underneath. Even when the AI produces clean and confident work with no made-up facts or errors, the foundation it is built on may never have been properly confirmed.

Take a private equity firm using AI to review portfolio company financials. The model correctly applies debt covenants and flags potential breaches. Everything in the output looks perfect. Yet the underlying transaction data was only internally reconciled. It was never independently confirmed against bank statements or original loan agreements. The AI gave a reliable-looking answer on shaky ground.

This is the difference between reconciled data and confirmed data. Many teams stop once the numbers match inside their system. They assume consistency equals accuracy. The structure feeding the AI, including balances, relationships, and transaction details, may contain errors that no one has verified from the source.

An AI tool can reason perfectly over flawed inputs and still produce output that passes normal review. The logic holds and no facts are invented, but the conclusion is wrong because the base was never solid.
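The difference between reconciled and confirmed data can be sketched in a few lines. This is a minimal illustration under stated assumptions: the account names, balances, and function names are hypothetical, and real confirmation procedures involve far more than an equality check.

```python
def reconciled(ledger, subledger):
    """Internal reconciliation: two internal records agree with each other."""
    return ledger == subledger

def unconfirmed_items(ledger, external_source):
    """Confirmation: compare each internal balance with independent evidence.
    Returns accounts that are missing from, or disagree with, that evidence."""
    return sorted(
        account
        for account, balance in ledger.items()
        if external_source.get(account) != balance
    )

# Hypothetical balances for illustration only.
ledger = {"loan-001": 2_500_000, "loan-002": 1_200_000}
subledger = {"loan-001": 2_500_000, "loan-002": 1_200_000}
bank_confirmation = {"loan-001": 2_500_000, "loan-002": 1_350_000}

print(reconciled(ledger, subledger))                 # True: internal records match
print(unconfirmed_items(ledger, bank_confirmation))  # ['loan-002']
```

The internal records agree perfectly, yet one balance differs from the external evidence. A model reasoning over the ledger alone would have no way to know.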

Internal audit must look beyond the model’s tendency to confabulate. It also needs to check whether the organization confirms the structural foundation before data even reaches the AI. Without that step, even the best output verification becomes meaningless.

The Governance Framework: Three Lines for AI Hallucination

Effective governance of hallucination risk requires clear accountability across all three lines of defense. Without it, the risk sits in the space between technology ownership, business deployment, and risk oversight.

The most common finding in this space is not that the organization lacks policies. It is that policies exist on paper while the operational controls that would catch a hallucinated output are absent, untested, or not enforced.

What an Audit of Hallucination Controls Actually Looks Like

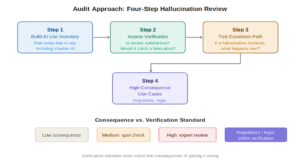

Auditors do not need to become AI specialists. They need a clear approach for assessing whether controls exist and whether those controls work in practice.

Start With the AI Use Inventory

The first question is whether the organization knows what AI tools are in active use across the business, and in which decisions their outputs play a role. In many organizations, inventories are incomplete, outdated, or fragmented across business lines. Shadow AI, including tools accessed through personal subscriptions or consumer‑grade interfaces, is a major blind spot.

Internal auditors should test the inventory for completeness by checking it against technology asset registers, vendor contracts, expense reports, and business process documentation. Any AI tool whose outputs feed into decisions or external communications without appearing in the inventory is an uncontrolled exposure and a governance failure.
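One way to picture the completeness test is as a comparison between the inventory and each evidence source. The sketch below assumes tool names have already been extracted from those sources. All names and data are hypothetical, and matching names in practice usually needs more than case-insensitive comparison.

```python
def inventory_gaps(inventory, evidence_sources):
    """Return tools seen in any evidence source but absent from the inventory,
    mapped to the sources where they were found."""
    known = {name.strip().lower() for name in inventory}
    gaps = {}
    for source, tools in evidence_sources.items():
        for tool in tools:
            key = tool.strip().lower()
            if key not in known:
                gaps.setdefault(key, []).append(source)
    return gaps

# Hypothetical data for illustration only.
inventory = ["ContractReviewer", "ResearchAssistant"]
evidence_sources = {
    "asset_register": ["ContractReviewer"],
    "vendor_contracts": ["ResearchAssistant", "MemoDrafter"],
    "expense_reports": ["memodrafter", "ChatTool Personal"],
}

gaps = inventory_gaps(inventory, evidence_sources)
for tool, sources in gaps.items():
    print(tool, sources)
```

Each tool that surfaces here is a candidate for follow-up: either the inventory is incomplete or the tool is in use without sanction.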

Assess the Verification Framework

For each AI use case in scope, a verification framework should exist. At a minimum: a defined verification step is required before AI output is relied upon; the person performing the review has enough subject-matter knowledge to detect an error; the outcome is documented; and accountability is clearly assigned.

An AI-generated compliance memo reviewed by someone who does not know the regulation is not a controlled output. An AI citation checked by someone without access to a legal database has not been verified. The question is not whether someone looked at the output. It is whether the review would have caught a hallucination.
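The four minimum elements of a verification framework lend themselves to a simple attribute test over sampled review records. The following is a sketch only: the record structure and field names are assumptions made for illustration, not a prescribed workpaper format.

```python
from dataclasses import dataclass

@dataclass
class ReviewRecord:
    use_case: str
    verification_performed: bool  # a defined verification step took place
    reviewer_qualified: bool      # reviewer has the subject-matter knowledge
    outcome_documented: bool      # result of the review is on file
    accountable_owner: str        # named accountable person; empty if none

def control_gaps(record):
    """List which of the four minimum elements are missing for one record."""
    gaps = []
    if not record.verification_performed:
        gaps.append("no verification step")
    if not record.reviewer_qualified:
        gaps.append("reviewer lacks subject-matter knowledge")
    if not record.outcome_documented:
        gaps.append("outcome not documented")
    if not record.accountable_owner.strip():
        gaps.append("no accountable owner")
    return gaps

# Hypothetical sampled record for illustration only.
memo = ReviewRecord("compliance memo", True, False, True, "")
print(control_gaps(memo))
```

In this sample a review did happen and was documented, but it still fails the test because the reviewer could not have caught an error and nobody is accountable for the result.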

Assess the Data Foundation and Source‑of‑Truth Integrity

Hallucination controls alone are not sufficient. For each use case, internal audit must also determine whether the data and documents feeding the AI have been independently confirmed before the model is allowed to reason over them.

A reconciled dataset is not a confirmed dataset, and an AI system can produce fluent, confident, and entirely wrong conclusions when the underlying foundation has never been validated against source evidence. Internal audit should also confirm that the organization can trace this data back to an authoritative source, with clear lineage showing how it was created, transformed, and approved before reaching the AI. If the source of truth is weak or lineage is unclear, the AI may reason correctly over inputs that were never reliable to begin with. The question is not only whether the organization verifies the AI’s visible output, but whether it has confirmed the inputs and the origin of those inputs that make that output appear reliable in the first place. Where this confirmation and lineage step is missing, the organization operates with a structural governance failure beneath the hallucination itself.

Test the Escalation Path

Internal auditors should trace the end‑to‑end path that would follow a hypothetical hallucination discovery: who is notified, what actions they are expected to take, the required response timescale, who is accountable for remediation, and whether decisions are formally documented. If this escalation path is informal, inconsistent, or unclear, it constitutes a finding.

Auditors should also check whether hallucination incidents are logged. An organization that has experienced multiple hallucinations but maintains no record of them has a material governance gap: without incident logging, patterns cannot be identified, controls cannot be strengthened, and management cannot demonstrate oversight.
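Where an incident log does exist, its entries can be tested against the elements of the escalation path. The sketch below assumes a simple dictionary per incident. The field names, the sample incident, and the 48-hour response window are placeholders for illustration, not recommended standards.

```python
from datetime import datetime, timedelta

REQUIRED_FIELDS = ("notified", "action_taken", "remediation_owner", "decision_record")

def escalation_findings(incident, max_response=timedelta(hours=48)):
    """Return gaps in one logged incident: missing fields or a late response.
    The 48-hour default is a placeholder, not a recommended standard."""
    findings = [f"missing {field}" for field in REQUIRED_FIELDS if not incident.get(field)]
    detected = incident.get("detected_at")
    responded = incident.get("responded_at")
    if detected is None or responded is None:
        findings.append("response time not recorded")
    elif responded - detected > max_response:
        findings.append("response exceeded required timescale")
    return findings

# Hypothetical incident for illustration only.
incident = {
    "detected_at": datetime(2025, 3, 3, 9, 0),
    "responded_at": datetime(2025, 3, 6, 9, 0),
    "notified": "Head of Compliance",
    "action_taken": "Memo withdrawn and re-reviewed",
    "remediation_owner": "",
    "decision_record": "INC-0001",
}
print(escalation_findings(incident))
# ['missing remediation_owner', 'response exceeded required timescale']
```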

Examine High-Consequence Use Cases Separately

Organizations with legal, regulatory, or fiduciary obligations need to apply verification standards that match the stakes. Auditors should confirm that high-consequence use cases are identified, classified, and subject to controls that reflect the potential harm of an undetected hallucination.

Vendor and Third-Party AI Tools

Stanford’s RegLab found that legal AI tools from major providers produced wrong or poorly supported answers more than 17 percent of the time, despite vendor claims of near‑zero error rates. The gap between what vendors claim and how these tools perform on real tasks is itself an audit finding waiting to happen.

Many organizations rely on AI tools they did not build and cannot inspect. Document drafting platforms, legal research tools, contract analysis systems, and compliance monitoring products are built into core processes across every sector.

Internal auditors should check whether vendor contracts include obligations around output accuracy and hallucination disclosure, whether the organization has done its own independent testing before deployment, and whether ongoing monitoring of vendor AI outputs is being done with the same care applied to internally built tools.
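Independent testing of a vendor tool can be as simple as having qualified reviewers grade a sample of outputs and comparing the observed error rate with the vendor's claim. The sketch below is illustrative: the sample size, the results, and the claimed rate are invented, and a real test would also consider sample design and statistical confidence.

```python
def observed_error_rate(results):
    """Share of sampled outputs that reviewers marked as wrong or unsupported.
    `results` is a list of booleans: True means the output was verified."""
    if not results:
        raise ValueError("sample is empty")
    return sum(1 for verified in results if not verified) / len(results)

def exceeds_claim(results, vendor_claimed_rate):
    """True when the observed error rate is higher than the vendor's claim."""
    return observed_error_rate(results) > vendor_claimed_rate

# Hypothetical sample: 50 graded outputs, 9 of which failed verification.
sample = [True] * 41 + [False] * 9
vendor_claimed_rate = 0.01  # hypothetical claim, for illustration only

print(f"{observed_error_rate(sample):.0%}")        # 18%
print(exceeds_claim(sample, vendor_claimed_rate))  # True
```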

Rising Regulatory and Standards-Based Expectations

Regulators now expect organizations to manage hallucination risk as part of standard AI governance. Key frameworks include:

- EU AI Act (2024/1689): Governance and transparency obligations for general-purpose AI models became applicable from August 2025 under the Act’s phased implementation.

- NIST AI RMF Generative AI Profile (NIST AI 600-1, July 2024): Identifies hallucinations, which it terms confabulation, as one of twelve distinct generative AI risk categories, recommending formal risk management and mitigation approaches.

- ISO/IEC 42001: Establishes requirements for continuous monitoring of AI system performance, including output accuracy. Certification is supported by BS ISO/IEC 42006:2025, which establishes competence requirements for certification bodies and auditors.

- IIA Standards (2024): Reinforce technology governance and emerging technology risks, including AI, as core internal audit responsibilities.

- ABA Formal Opinion 512 (2024): Clarifies that lawyers using generative AI have a professional responsibility to understand its limitations and verify outputs before relying on them.

The Finding That Matters Most

Internal audit functions face two high-impact AI risks that operate in parallel. The first is hallucination, where the system produces fabricated or incorrect outputs that can directly mislead financial reporting, customer decisions, regulatory submissions, or operational processes. The second is the foundation risk, where organizations rely on AI outputs without confirming the underlying data or maintaining visibility into how the systems are being used. One risk is visible. The other is silent. Both can cause harm.

Organizations that manage these risks well treat them as business controls, not technology problems. They assign clear accountability at the level of each use case. They verify the accuracy of AI-generated outputs and confirm the data foundations that feed them. And they elevate AI output quality and data confirmation as standing governance items, reported alongside data quality, model performance, and operational risk.

The real risk is not simply that AI systems hallucinate. They do, and they will continue to. The real risk is that organizations deploy these systems in consequential processes without the governance needed to detect fabrications and without the controls needed to confirm the underlying data foundations before they cause harm.

Internal audit’s role is to give the board confidence that the organization can verify AI-generated outputs where it matters, confirm the structural foundation that feeds those outputs, escalate appropriately when a hallucination is found, and address issues before they reach customers, regulators, or the board itself.

A mature internal audit function treats hallucination risk and foundation risk as parallel, high-impact exposures requiring sustained oversight, clear accountability, proportionate verification controls, and regular independent review.

AI tools will confabulate. AI governance doesn’t have to.

Nirpendra Ajmera is a Chief Audit Executive at an Electrical Utility Company focused on modernizing audit, controls, and AI‑related risk oversight.