There is a particular kind of risk that keeps model risk officers up at night. It is the risk of a model that keeps running, keeps producing outputs, keeps feeding decisions into the business, while quietly becoming wrong. No alert fires. No dashboard turns red. The model simply drifts, and the organization drifts with it. Most organizations assume they are monitoring model performance. In reality, many are monitoring dashboards rather than underlying risk.

That risk is AI model drift. For internal audit functions that are serious about providing assurance over technology and data-dependent processes, it represents one of the most underexamined risks sitting inside organizations right now. Regulators are paying attention. Courts have started awarding damages. And boards are beginning to ask questions that many internal audit functions are not yet equipped to answer.

What Model Drift Actually Means

Model drift is not one phenomenon. It has at least three distinct forms, and internal auditors who treat them as interchangeable will miss the very signals they are meant to detect. The simplest way to understand the difference is to anchor all three to a single example: a credit card fraud detection model trained on transaction data from 2018 to 2019, still running in 2026.


Data drift occurs when the inputs change. After the pandemic, customers shifted heavily toward e-commerce, contactless payments, and subscription services. The model was trained on a world where in-store purchases dominated. The logic has not changed; the data feeding the model has.

Concept drift occurs when the meaning changes. Suppose the model learned that late-night transactions at unfamiliar merchants are strong fraud signals. That was true in 2019. By 2026, round‑the‑clock food delivery, ride‑sharing, and online marketplaces make those same transactions routine. The inputs look familiar, but their relationship to fraud has shifted.

Output drift occurs when the model’s behaviour changes even if inputs look stable. For example, if the fraud model suddenly flags far more transactions as high risk than it did a year ago, that change in output distribution is a warning sign and often the earliest indicator of a problem.

What makes all three forms dangerous is that they tend to degrade performance gradually. There is rarely a moment of obvious failure. Accuracy erodes by a percentage point here, a percentage point there, until the cumulative effect on business outcomes becomes large enough to attract attention, often months after it should have.

Why This Is an Internal Audit Issue

Some internal audit functions treat model risk as the exclusive territory of model risk management or data science teams. That instinct is understandable but mistaken.

Model risk management typically focuses on development and initial validation, while ongoing monitoring and governance often fall into a gap between the model team, the business, and IT. Internal audit is well positioned to assess whether that gap has been closed.

The consequences of undetected drift can be material. The U.K. Financial Conduct Authority’s multi‑firm reviews of algorithmic systems have highlighted weak post‑deployment monitoring, unclear ownership, and outdated governance as substantial risks. These are governance failures, not technical ones, and governance is core internal audit territory.

Real-World Cases: When Model Drift Goes Unmanaged

The following cases are matters of public record. They illustrate how undetected model drift, meaning changes in data, relationships, or real-world conditions, can degrade model performance and lead to material business, regulatory, or operational consequences.

- Zillow Offers Collapse (2021): Pricing Model Drift: Zillow’s home pricing algorithm began systematically overestimating property values as post-pandemic housing conditions shifted. Result: business line shut down; $881 million loss.

- Google Flu Trends (2014): Concept Drift: Google Flu Trends overestimated flu activity for two seasons because search behavior shifted. Media attention and changing online habits broke the link between search terms and real flu cases.

- COVID-Era Credit Risk Models (2020 to 2021): Data and Concept Drift: Credit risk models across global banks became unreliable almost overnight when borrower behavior, income stability, and macroeconomic indicators shifted during COVID. Supervisors in multiple jurisdictions acknowledged industry-wide drift and issued guidance for rapid recalibration.

In several instances, the issue was not the absence of monitoring tools, but the absence of clear ownership and escalation.

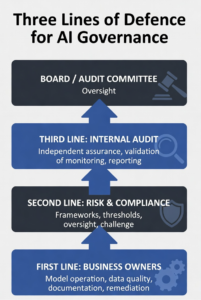

The Governance Framework: Three Lines for AI

Effective governance of AI model drift requires clear accountability across all three lines.

In practice, responsibilities should be clearly defined:

- First line (Business / Model Owners): Monitor performance, detect drift, initiate response.

- Second line (Risk / Model Validation): Challenge assumptions, validate monitoring frameworks, and define thresholds.

- Third line (Internal Audit): Provide independent assurance over governance, monitoring, and escalation.

The diagram below sets out how responsibilities should be distributed.

Figure 1: The Three Lines Model applied to AI governance and model drift oversight

As illustrated in Figure 1, accountability must be clearly defined across all three lines.

What an Audit of Drift Controls Actually Looks Like

Auditors do not need to become data scientists. They need a clear framework for assessing whether controls exist and are operating effectively.

Start With the Model Inventory

The most fundamental question is whether the organization knows what models it has running in production. Mature organizations maintain a model inventory that records each model’s purpose, the data it uses, the decisions it informs, its risk classification, and the governance structure around it. In practice, many inventories are incomplete, outdated, or fragmented across business lines. Auditors should test the inventory for completeness by cross-referencing it against technology asset registers, vendor contracts, and business process documentation.
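The completeness test described above is, at its core, a reconciliation between lists. The sketch below shows one way it might look, assuming the inventory and the other registers can each be reduced to a set of model identifiers. The `InventoryRecord` fields, the `reconcile` helper, and the example identifiers are all hypothetical and chosen only for illustration; real inventories rarely share clean identifiers, so some matching work would normally come first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryRecord:
    """One model inventory entry (hypothetical fields, mirroring the text)."""
    model_id: str
    purpose: str
    data_sources: tuple
    decisions_informed: str
    risk_class: str
    owner: str


def reconcile(inventory, *other_registers):
    """Compare inventory model IDs with IDs seen in other registers.

    Each register is any iterable of model IDs, e.g. drawn from a
    technology asset register or a list of vendor contracts.
    """
    inventoried = {record.model_id for record in inventory}
    seen_elsewhere = set().union(*other_registers)
    return {
        # Models running somewhere but absent from the inventory.
        "missing_from_inventory": sorted(seen_elsewhere - inventoried),
        # Inventory entries with no trace elsewhere (possibly retired or stale).
        "not_found_elsewhere": sorted(inventoried - seen_elsewhere),
    }


inventory = [
    InventoryRecord("fraud-v3", "card fraud detection", ("transactions",),
                    "block or allow payment", "high", "payments business line"),
]
asset_register = {"fraud-v3", "churn-v1"}
vendor_contracts = {"vendor-credit-score"}

result = reconcile(inventory, asset_register, vendor_contracts)
print(result["missing_from_inventory"])
```

Either list being non-empty is a prompt for follow-up rather than a finding in itself: an unlisted model may be out of scope, and an unmatched inventory entry may simply be named differently elsewhere.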

Assess the Monitoring Framework

For each model in scope, defined metrics should be tracked on an ongoing basis. At a minimum, these should cover input feature distributions, output score distributions, and performance metrics where ground truth is available. These metrics should be tied to thresholds that reflect business impact, not just statistical variation.

Auditors should confirm that someone is running these tests, at appropriate frequency, against defined thresholds. The threshold question matters more than it might appear. In practice, thresholds are often set at model deployment and rarely revisited. This creates a control that exists on paper but gradually loses relevance as operating conditions change.

A monitoring dashboard alone is not sufficient if the thresholds that trigger action were set arbitrarily at model launch and have never been revisited. Auditors should assess how thresholds were derived, whether they have been tested against the model’s current operating environment, and who has authority to adjust them.

Two statistical techniques are critical at a conceptual level:

- Population Stability Index (PSI): Widely used to detect data drift. It compares the current distribution of an input variable or score with a baseline. A high PSI indicates the model is seeing a different population than it was trained on.

- Kolmogorov-Smirnov (KS) Test: Commonly used to detect output drift. It compares distributions of model scores from different periods.

Internal auditors should confirm that such tests are being applied and that results are being reviewed.
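For auditors who want to see what these two tests compute, the following is a minimal sketch using only the Python standard library. The function names, the choice of ten quantile bins, and the small `eps` floor for empty bins are assumptions made for illustration, not a prescribed method. Production teams would more likely rely on an established statistics library, which would also supply a p-value for the KS test.

```python
import math
from bisect import bisect_right


def psi(baseline, current, n_bins=10, eps=1e-6):
    """Population Stability Index of `current` measured against `baseline`."""
    ordered = sorted(baseline)
    # Bin edges sit at baseline quantiles, so each bin holds a roughly
    # equal share of the baseline population.
    edges = [ordered[int(len(ordered) * i / n_bins)] for i in range(1, n_bins)]

    def shares(values):
        counts = [0] * n_bins
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # eps floors empty bins to avoid log(0) and division by zero.
        return [max(c / len(values), eps) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: largest gap between the empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    # The gap can only change at observed values, so checking those suffices.
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


baseline_scores = list(range(1000))               # stand-in for training-era values
current_scores = [v + 500 for v in range(1000)]   # same shape, shifted upward

print(psi(baseline_scores, baseline_scores))      # identical populations
print(psi(baseline_scores, current_scores))       # shifted population
print(ks_statistic(baseline_scores, current_scores))
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable, 0.1 to 0.25 as worth investigating, and above 0.25 as a significant shift. Those cut-offs are a convention rather than something this article prescribes, and they are exactly the kind of threshold that should be tied to business impact and periodically revisited.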

Test the Escalation Path

Monitoring is only as valuable as the response it triggers. One of the most common failures in AI governance is the existence of monitoring infrastructure that generates alerts that nobody acts on. Auditors should trace the escalation path from a hypothetical drift alert: who receives it, what they are expected to do, what the timescale for response is, who signs off on decisions to retrain or retire a model, and whether that decision-making is documented. If the escalation path is informal, undocumented, or unclear about accountability, that is a finding.
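Where alerts are logged in a system, the same trace can be run over historical alerts rather than a hypothetical one. The sketch below assumes each alert is available as a dictionary with `id`, `raised_at`, `owner`, `responded_at`, and `decision_approver` fields, and assumes a five-day response window. All of these names and the window itself are hypothetical; the actual fields and timescale would come from the organization's own policy and tooling.

```python
from datetime import datetime, timedelta


def escalation_exceptions(alerts, sla=timedelta(days=5)):
    """Return (alert id, issue) pairs for alerts that break the escalation path."""
    findings = []
    for alert in alerts:
        responded_at = alert.get("responded_at")
        if not alert.get("owner"):
            findings.append((alert["id"], "no named owner"))
        if responded_at is None:
            findings.append((alert["id"], "no recorded response"))
        elif responded_at - alert["raised_at"] > sla:
            findings.append((alert["id"], "response outside SLA"))
        # A response without a recorded approver means the retrain/retire
        # decision was not documented as signed off.
        if responded_at is not None and not alert.get("decision_approver"):
            findings.append((alert["id"], "decision not signed off"))
    return findings


t0 = datetime(2026, 1, 1)
alerts = [
    {"id": "A1", "raised_at": t0, "owner": "fraud-ops",
     "responded_at": t0 + timedelta(days=2), "decision_approver": "model-owner"},
    {"id": "A2", "raised_at": t0, "owner": "",
     "responded_at": None, "decision_approver": None},
    {"id": "A3", "raised_at": t0, "owner": "fraud-ops",
     "responded_at": t0 + timedelta(days=9), "decision_approver": None},
]

for alert_id, issue in escalation_exceptions(alerts):
    print(alert_id, issue)
```

A check like this only covers alerts that were logged in the first place. It complements, rather than replaces, walking through the path with the people named in it.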

Examine Fairness Monitoring Separately

Performance drift and fairness drift do not always move together. A model’s overall accuracy can remain stable while its error rate for a particular demographic group deteriorates significantly.

Organizations subject to anti‑discrimination regulation, including those in financial services, insurance, employment, and healthcare, need to monitor model outputs across demographic segments on an ongoing basis. Internal auditors should confirm that this monitoring exists, covers relevant protected characteristics, and is reviewed by someone with appropriate authority and expertise.

In many cases, fairness degradation is detected later than performance drift, making it a lagging but high‑impact risk.
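The point that overall accuracy can mask a deteriorating segment is easy to show with a small calculation. The sketch below assumes that predictions and confirmed outcomes are available per record together with a segment label. The function names, the `(segment, predicted, actual)` record layout, and the five-percentage-point tolerance are hypothetical choices for illustration. Error rate is also only one of several possible fairness measures, and the right one depends on the use case and the applicable regulation.

```python
from collections import defaultdict


def error_rate_by_segment(records):
    """records: iterable of (segment, predicted, actual) tuples."""
    totals, errors = defaultdict(int), defaultdict(int)
    for segment, predicted, actual in records:
        totals[segment] += 1
        errors[segment] += int(predicted != actual)
    return {segment: errors[segment] / totals[segment] for segment in totals}


def segments_breaching(records, max_gap=0.05):
    """Segments whose error rate exceeds the overall rate by more than max_gap."""
    records = list(records)
    overall = sum(p != a for _, p, a in records) / len(records)
    rates = error_rate_by_segment(records)
    return {s: rate for s, rate in rates.items() if rate - overall > max_gap}


# Synthetic example: segment A dominates the volume and performs well,
# so the overall error rate looks acceptable while segment B does not.
records = (
    [("A", 1, 1)] * 90 + [("A", 1, 0)] * 10
    + [("B", 1, 1)] * 6 + [("B", 0, 1)] * 4
)

print(error_rate_by_segment(records))
print(segments_breaching(records))
```

In this synthetic data the overall error rate is under 13 percent, while segment B's is 40 percent. A dashboard showing only the aggregate figure would not surface that gap.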

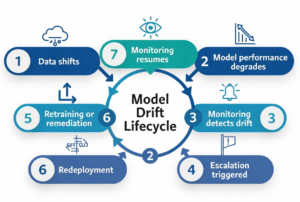

Figure 2: Model drift lifecycle — from training through deployment to detection and remediation

Figure 2 highlights how drift emerges and must be managed across the model lifecycle.

The Governance Gap Most Organizations Have Not Closed

Operational monitoring is one dimension. The governance framework around it is another, and in many organizations, it is the weaker of the two. Effective model drift governance requires a clear policy defining what constitutes a material drift event, a formal retraining and revalidation process with documented approvals, regular board or audit committee reporting on AI model performance, and explicit accountability for model owners distinct from model developers.

A recurring audit finding in this space is the absence of formal model retirement criteria. Organizations are good at launching models; far fewer are disciplined about deciding when a model has drifted too far to remain in production. This is not just a technical omission; it is a governance gap that allows outdated models to operate indefinitely, long after their business value or reliability has eroded.

Vendor and Third-Party Models

Many organizations now rely heavily on models they did not build. Credit scoring models, fraud detection tools, customer segmentation engines, and risk rating systems from third-party vendors are embedded in core business processes across every sector. The governance challenge here is acute, because the organization bears the risk of these models’ outputs while having limited visibility into how they work or how they are maintained.

Auditors should assess whether vendor model agreements include contractual obligations around drift monitoring and notification, whether the organization has conducted its own independent validation of vendor models before deployment, and whether ongoing performance monitoring of vendor models is being conducted with the same rigour applied to internally developed models.

A vendor asserting that their model is performing well is not equivalent to the organization having independent evidence that it is performing adequately in its own operating environment. In practice, over-reliance on vendor assurances is one of the most common blind spots observed in audits of model governance.

Generative AI Has Introduced a New Drift Problem

This challenge is further amplified by the rise of generative AI systems. Large language models introduce additional forms of drift, including prompt drift, retrieval drift in Retrieval‑Augmented Generation (RAG) architectures, and misalignment as real-world knowledge evolves. Traditional monitoring approaches are often insufficient in these contexts, requiring new evaluation and control mechanisms.

Regulators now expect organizations to monitor and manage drift as part of standard AI governance. Audit functions operating in regulated industries should be familiar with the following frameworks and their specific requirements:

- EU AI Act: Requires lifecycle risk controls, data governance, and continuous monitoring for high‑risk AI.

- DORA: Mandates ongoing oversight of technology and algorithmic risks, plus incident reporting.

- California CPRA: Requires explainability for automated decisions and monitoring for discriminatory outcomes.

- ISO/IEC 42001: Sets expectations for identifying AI risks and monitoring operational performance.

- NIST AI RMF: Treats drift management as part of its Measure and Manage functions.

- IIA Standards (2024): Elevate technology governance and model risk assurance as core audit responsibilities.

- Basel / SR 11‑7: Requires continuous model oversight, not one‑time validation, aligned to model materiality.

Across all frameworks, the expectation is clear: drift must be monitored, documented, and addressed. Internal audit functions that cannot demonstrate assurance over this work have a material gap. In many organizations, drift is not unmanaged because it is complex, but because it is not clearly owned.

The Internal Audit Finding that Matters Most

When internal audit functions report on AI model drift, the finding that matters most is rarely technical. It is that senior management and the board lack reliable, recurring visibility into how AI systems are performing in production. That information gap is what allows drift to compound quietly and what enables decisions to continue unchallenged long after a well‑informed board would have intervened.

Organizations that are getting this right share a few traits. They invest in monitoring infrastructure before issues surface. They assign clear, business‑line accountability for model performance rather than leaving it with developers. And they treat AI model risk as a standing governance item, on par with credit or operational risk. Drift is inevitable. Governance failure is not.

The greatest risk is not model drift itself but the absence of clear, timely information reaching senior leadership. Drift is predictable; its risks grow when governance is fragmented or monitoring inconsistent.

Internal audit’s role is to provide the board with confidence that the organization can detect degradation early, escalate appropriately, and remediate before it affects customers, compliance, or strategic outcomes. A high-performing internal audit function treats model drift as a continuous, high‑impact risk requiring sustained oversight, clear accountability, and regular independent validation.

Nirpendra Ajmera is a Chief Audit Executive at an Electrical Utility Company focused on modernizing audit, controls, and AI‑related risk oversight.